Cisco’s Model Provenance Kit generates fingerprints for AI models to track lineage, detect tampering, and verify claims about model source and vulnerabilities.

Cisco has unveiled a new open-source tool called Model Provenance Kit, designed to help organizations address the security and compliance issues that come with using third-party AI models. As organizations increasingly rely on models from repositories such as Hugging Face, which hosts millions of models, they often lack visibility into how those models have been modified and whether claims about their source, vulnerabilities, and training biases are accurate.

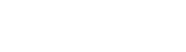

The Python-based toolkit and its CLI address these issues by generating a fingerprint for each model from metadata signals, tokenizer similarity, and weight-level identity signals, including embedding geometry, normalization layers, energy profiles, and direct weight comparisons. The tool has two modes: compare, which checks two models for shared lineage, and scan, which attempts to find a given model's closest lineage by matching its fingerprint against a database compiled by Cisco.
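The two modes can be illustrated with a minimal sketch. It is not Cisco's implementation and does not use the kit's API: it assumes a fingerprint is simply a short vector of numbers (here, a few weights sampled from the same positions in each model) and uses cosine similarity as the only signal, whereas the kit combines metadata, tokenizer, and weight-level signals. The `compare` and `scan` functions, the 0.95 threshold, and the model names are all hypothetical.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical fingerprint: a fixed-length vector of numbers, e.g. a sample
# of weights taken from the same positions in each model.
Fingerprint = List[float]


def cosine_similarity(a: Fingerprint, b: Fingerprint) -> float:
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("fingerprints must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def compare(a: Fingerprint, b: Fingerprint, threshold: float = 0.95) -> Tuple[float, bool]:
    """'compare'-style check: similarity score plus a shared-lineage verdict."""
    score = cosine_similarity(a, b)
    return score, score >= threshold


def scan(query: Fingerprint, database: Dict[str, Fingerprint]) -> Tuple[str, float]:
    """'scan'-style lookup: the database entry whose fingerprint is closest."""
    if not database:
        raise ValueError("fingerprint database is empty")
    scores = {name: cosine_similarity(query, fp) for name, fp in database.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# Toy fingerprints (made-up numbers, for illustration only).
base = [0.2, -0.5, 0.1, 0.7]
fine_tuned = [0.21, -0.48, 0.12, 0.69]  # small perturbation of `base`
unrelated = [-0.6, 0.1, 0.4, -0.2]

print(compare(base, fine_tuned))  # high score, shared lineage flagged
print(compare(base, unrelated))   # negative score, no shared lineage
print(scan(fine_tuned, {"base-a": base, "base-b": unrelated}))
```

A lightly fine-tuned model's weights usually stay close to its base, so its vector points in nearly the same direction, while an unrelated model's does not. A single score like this is easy to confound, which is one reason to combine several independent signals, as the kit does, rather than rely on one.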

Cisco notes that vulnerabilities in third-party models can propagate into whatever is built on them, whether an internal chatbot, an agent application, or a customer-facing tool. Without provenance, organizations cannot trace an incident back to its root cause or determine which other models in their stack are affected. As models are continuously fine-tuned, distilled, merged, and repackaged, their lineage becomes harder to track and easier to obscure.
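Once lineage is known, the impact analysis described above reduces to walking a graph. The following is a minimal sketch, assuming provenance results have already been recorded as a simple parent-to-child map; the model names and the `reachable`, `descendants`, and `ancestors` helpers are hypothetical and are not part of the Model Provenance Kit.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical lineage graph: each model maps to the models derived from it
# (fine-tunes, distillations, merges, repackagings). A merge has two parents.
Lineage = Dict[str, List[str]]


def reachable(graph: Lineage, start: str) -> Set[str]:
    """Breadth-first search: every node reachable from `start`.

    In an acyclic lineage graph, `start` itself is not included.
    """
    seen: Set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def descendants(lineage: Lineage, model: str) -> Set[str]:
    """Models that may inherit a flaw found in `model`."""
    return reachable(lineage, model)


def ancestors(lineage: Lineage, model: str) -> Set[str]:
    """Candidate root causes: every model that `model` was derived from."""
    parents: Lineage = {}
    for parent, children in lineage.items():
        for child in children:
            parents.setdefault(child, []).append(parent)
    return reachable(parents, model)


lineage: Lineage = {
    "base-7b": ["chat-ft", "distilled-1b"],
    "chat-ft": ["merged-agent"],
    "other-base": ["merged-agent"],
    "distilled-1b": ["support-bot"],
}

print(sorted(descendants(lineage, "base-7b")))
# ['chat-ft', 'distilled-1b', 'merged-agent', 'support-bot']
print(sorted(ancestors(lineage, "merged-agent")))
# ['base-7b', 'chat-ft', 'other-base']
```

Breadth-first search with a `seen` set is used because lineage is a directed graph rather than a tree: a merged model has more than one parent, and a shared descendant should be reported only once.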

The open-source Model Provenance Kit is available on GitHub, and Cisco’s dataset of base model fingerprints is available on Hugging Face.

Source: SecurityWeek — Cisco Releases Open Source Tool for AI Model Provenance