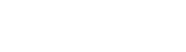

Palo Alto Networks demonstrated how Vertex AI agents could be turned into ‘double agents’: excessive default P4SA permissions enabled data exfiltration and backdoor creation.

Palo Alto Networks has shared details about how its researchers weaponized AI agents built on Google Cloud’s Vertex AI development platform, demonstrating that these agents could be compromised by attackers and turned into double agents enabling data exfiltration, backdoor creation, and infrastructure compromise.

The research focused on the Vertex Agent Engine and the Agent Development Kit, and found that the Per-Project, Per-Product Service Agent (P4SA) has excessive permissions by default. The researchers showed that these permissions could be abused to obtain the credentials of a Google Cloud Platform (GCP) service agent and use them to move from the AI agent’s execution context into the owner’s project and its associated data storage. With compromised P4SA credentials, an attacker could gain unrestricted access to the Google project hosting Vertex AI, download container images from private repositories, access restricted Artifact Registry repositories, and manipulate files to achieve remote code execution within the agent’s environment.

The researchers warned that gaining access to private container images not only exposes Google’s intellectual property but also provides an attacker with a blueprint to find further vulnerabilities. They also found that Google Cloud Storage buckets containing potentially sensitive information could be accessed through the same compromised credentials.

Google has addressed the issue by revising its documentation to point out the potential risks, and it recommends using Bring Your Own Service Account (BYOSA) to enforce least-privilege execution, so that the agent is granted only the permissions it requires to function. Google also noted that strong, non-overridable controls are in place to prevent service agents from altering production images.
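
As a rough illustration of what a least-privilege review might look like, the sketch below scans an IAM policy document for Google-managed service agents that hold broad roles. It is not taken from the Palo Alto Networks research or from Google's guidance. It assumes the standard IAM policy JSON shape (a `bindings` list of `role` and `members`), such as the output of `gcloud projects get-iam-policy PROJECT_ID --format=json`. The set of roles treated as "broad", the `gcp-sa-` domain heuristic for spotting service agents, and the project number in the example are all illustrative assumptions.

```python
# Illustrative assumption: which roles count as "broad" is a local policy choice,
# not a list published by Google or the researchers.
BROAD_ROLES = {
    "roles/owner",
    "roles/editor",
    "roles/storage.admin",
    "roles/artifactregistry.admin",
}


def is_service_agent(member: str) -> bool:
    """Heuristic (assumption): Google-managed service agents use a gcp-sa-* domain."""
    if not member.startswith("serviceAccount:"):
        return False
    email = member.split(":", 1)[1]
    _, _, domain = email.partition("@")
    return domain.startswith("gcp-sa-")


def find_broad_agent_bindings(policy: dict) -> list[tuple[str, str]]:
    """Return sorted (member, role) pairs where a service agent holds a broad role."""
    findings = []
    for binding in policy.get("bindings", []):
        role = binding.get("role", "")
        if role not in BROAD_ROLES:
            continue
        for member in binding.get("members", []):
            if is_service_agent(member):
                findings.append((member, role))
    return sorted(findings)


# Hypothetical policy with a made-up project number, for demonstration only.
EXAMPLE_POLICY = {
    "bindings": [
        {
            "role": "roles/storage.admin",
            "members": [
                "serviceAccount:service-123456789012@gcp-sa-aiplatform-re.iam.gserviceaccount.com",
                "user:alice@example.com",
            ],
        },
        {
            "role": "roles/aiplatform.user",
            "members": [
                "serviceAccount:agent-runner@my-project.iam.gserviceaccount.com",
            ],
        },
    ]
}

if __name__ == "__main__":
    for member, role in find_broad_agent_bindings(EXAMPLE_POLICY):
        print(f"{member} holds broad role {role}")
```

A check like this only flags candidates for review. Whether a given role is actually excessive depends on what the agent needs, which is the judgment BYOSA is meant to make explicit.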

Source: SecurityWeek — Google Addresses Vertex Security Issues After Researchers Weaponize AI